Out on the GenAI Wild West: Part II - The Long Arm of the Law

Out on the GenAI Wild West

In Part I, we showed that large language models (LLMs) degrade under adversarial pressure and that single-turn evaluations fail to capture real-world risk. That analysis focused on how security evaluations can measure model behavior and alignment degradation in ’traditional’ generative AI use-cases (e.g., chatbots or assistants).

Part II shifts the focus to agentic AI.

Enterprises are no longer deploying isolated chatbots. They are deploying agentic systems: LLM-based applications with memory, tool access, background processes, API integrations, and multi-step planning loops. These systems read internal data, retrieve external content, and execute workflows across critical business systems.

Traditional GenAI use cases—summarization, drafting, knowledge retrieval—primarily create ‘content risk’. Agentic systems introduce ‘action risk’.

An agent that can:

- Read internal documents

- Access CRM or ticketing systems

- Call APIs via MCP or similar protocols

- Send messages or create artifacts externally

is no longer just generating content. It is operating as a semi-autonomous workflow engine.

This matters most in regulated industries. In financial services, an agentic deployment may touch customer PII, transactional systems, audit logs, or sensitive communications channels. That moves risk from reputational exposure to regulatory liability.

Yet the core technical constraint remains: LLMs cannot reliably distinguish data from instructions. They operate by extending a single context window of text. When untrusted content enters that window—via email, a Jira ticket, a web page, or an MCP response—the model may treat it as executable guidance.

That is not a theoretical vulnerability. It is structural limitation.

The Long Arm of the Law

Recent research and disclosures demonstrate that agentic security risks are already tangible, not speculative.

- CVE-2025-32711 (“EchoLeak”) documented a zero-click data exfiltration path in Microsoft 365 Copilot (CVSS 9.3). A single crafted email caused the agent to retrieve and expose sensitive data without user interaction.

- At Black Hat USA 2025, Zenity Labs demonstrated live exploits across Microsoft Copilot, ChatGPT, Salesforce Einstein, and Google Gemini. In one case, a crafted email triggered connected data access through integrated services. “AgentFlayer” showed how a malicious Jira ticket, accessed through an MCP integration, could exfiltrate sensitive data by embedding instructions in what appeared to be legitimate content.

- In August 2024, Slack AI was manipulated via indirect prompt injection to surface content from private channels when exposed to malicious input placed in a public channel.

These incidents follow a consistent pattern described by Simon Willison as the “Lethal Trifecta”:

When all three elements of the Lethal Trifecta are present—sensitive data access, exposure to untrusted content, and outbound communication—data exfiltration becomes a prevalent risk. Multi-agent systems do not just increase that risk; they compound it. Dynamic tool invocation, multi-step planning loops, cross-agent delegation, persistent memory, and background processes all expand the attack surface from prompt-level manipulation to workflow compromise.

Palo Alto Networks Unit 42 recently demonstrated that GPT-4o, when operating as an agent, will execute attacks it correctly refuses in standard chat mode. That finding alone highlights a structural gap between model-level safeguards and agentic execution layers.

Multi-agent systems amplify this exposure because orchestration layers introduce implicit trust boundaries. Agent A’s output becomes Agent B’s instruction without cryptographic validation, policy enforcement, or semantic verification. Compromise propagates laterally by design.

Recent comparative research (arXiv:2512.14860v1) evaluated popular orchestration patterns and demonstrated that planners can systematically bypass individual sub-agent safeguards. Researchers tested five leading models (Claude 3.5 Sonnet, Gemini 2.5 Flash, GPT-4o, Grok 2, Nova Pro) across two agent frameworks (AutoGen and CrewAI) using a seven-agent architecture and 13 attack scenarios spanning prompt injection, SQL injection, SSRF, and tool misuse. Even when a sub-agent refused a malicious instruction, the orchestrator frequently reformulated or decomposed the objective until execution succeeded.

Across 130 total test cases, the overall refusal rate was only 41.5%, meaning the majority of malicious prompts succeeded despite ’enterprise-grade’ safety controls. Notably, framework design materially influenced outcomes:

- AutoGen refused 52.3% of attacks.

- CrewAI refused only 30.8% of attacks.

Model variance was equally concerning. Grok 2 on CrewAI rejected only 15.4% of attacks (2 out of 13). In one cloud metadata SSRF scenario, it generated and executed real malicious Python code—producing authentic network errors—demonstrating genuine tool-level execution rather than hallucinated output.

The research also identified “hallucinated compliance,” where models fabricate plausible outputs instead of refusing, thus complicating security validation.

Although orchestration and model architecture influence security risks, the root cause was often insecure implementation: shared credentials in .env files, overly broad tool permissions, lack of runtime isolation, and absence of validation between agent-to-agent handovers.

Agentic systems inherit both LLM risks (prompt injection, data leakage, non-deterministic outputs) and traditional software risks introduced through tool integrations—SQL injection, remote code execution, metadata token abuse, and broken access control. When agents have dynamic tool invocation, persistent memory, and outbound API capabilities, the blast radius can quickly escalates from data exfiltration to lateral compromise of critical systems that interface with these agents.

For regulated enterprises—particularly in financial services—this is not a “model issue.” It is an architectural one.

There’s a new sheriff in town

The risks outlined above are not occurring in a regulatory vacuum. Agentic AI may feel like frontier technology, but it operates squarely within existing legal, operational, and supervisory boundaries.

For financial services in particular, autonomy does not dilute accountability. If an AI agent retrieves customer data, executes a transaction, modifies records, or communicates externally, those actions fall under established regulatory obligations governing operational resilience, data protection, model risk management, and internal controls.

The shift from chatbot-style GenAI to agentic architectures changes the enforcement surface. Regulators are no longer evaluating isolated model outputs—they are evaluating:

- Control design around autonomous decision chains

- Privilege enforcement across integrated systems

- Runtime monitoring and incident detection

- Auditability of multi-step workflows

- Governance over model drift and behavioural variance

Agentic AI therefore sits at the intersection of AI risk, cybersecurity, operational risk, and compliance oversight.

The good news: governance is not undefined. The frameworks already exist. The question is whether organizations are mapping agentic architectures to them with sufficient technical rigour.

| Framework / Regulation | Relevance to Agentic AI |

|---|---|

| NIST AI RMF | Requires lifecycle risk management across Govern, Map, Measure, and Manage functions, including runtime monitoring and documentation. |

| ISO/IEC 42001 | Formalizes AI management systems, governance roles, and oversight requirements. |

| ISO/IEC 42005 | Guides structured AI impact assessments prior to deployment. |

| ISO/IEC 23894 | Aligns AI risk management with enterprise risk and control models. |

| EU AI Act | Mandates logging, human oversight, risk classification, and accountability for high-risk systems. |

| SR 11-7 (Model Risk Management) | Requires validation, monitoring, and governance of models that impact financial decisions. |

Agentic systems often meet criteria for “high-risk” under EU AI Act interpretations and would fall under model governance obligations in regulated organizations.

Frameworks such as MITRE ATLAS provide adversarial techniques specific to ML systems, including prompt injection and data poisoning patterns. The Cloud Security Alliance’s MAESTRO framework introduces structured threat modeling for autonomous AI systems.

For financial institutions, the FINOS AI Governance Framework (AIGF) complements this by providing a comprehensive catalogue of AI-related risks and associated mitigations. Rather than prescribing one-size-fits-all controls, it enables institutions to apply a heuristic risk identification framework to determine which risks are most relevant for a given use case—mapping technical threats to governance, operational, and compliance obligations.

Used together, these frameworks allow organizations to:

- Systematically map agentic attack surfaces and autonomy risks

- Identify which risks materially apply to a specific deployment

- Define mitigating controls (least privilege, isolation, runtime validation, monitoring)

- Produce defensible evidence of continuous oversight

These methodologies become critical when regulators or internal audit functions ask:

- How was autonomy risk assessed prior to deployment?

- What controls prevent prompt injection from escalating into data exfiltration?

- How are tool permissions scoped and validated?

- How is behavioural drift detected and remediated?

- What telemetry and audit trails support safe operation?

While agentic AI governance is ever-evolving, security and risk management legislation already applies. The obligation is not to invent new rules or standards—but to demonstrate that agentic systems are governed with the same rigour as any other high-risk automation layer.

Deputizing your defenses

1. Agentic Threat Modeling

Traditional application threat modeling is insufficient for autonomous systems.

Agentic systems must be modelled at three layers:

(a) Token Boundary Layer

- Identify where trusted system prompts and untrusted content are concatenated.

- Map where tool outputs are re-ingested into the planning loop.

- Document any context mixing that allows untrusted text to influence action selection.

This is the root primitive behind prompt injection.

(b) Orchestration Layer

- Map planner → worker delegation paths.

- Identify cross-agent trust boundaries.

- Determine whether plans are generated before or after exposure to untrusted content.

- Model re-planning loops triggered by tool responses.

(c) Execution / Tooling Layer

Map:

- Tool privilege scopes (per-task vs global credentials).

- Identity context (service accounts, metadata endpoints, API tokens).

- Network egress paths.

- File system access boundaries.

- Runtime isolation controls (containers, sandboxing, DevContainers).

Assess:

- Whether tool calls are validated before execution.

- Whether outputs are schema-validated and taint-tracked.

- Whether re-ingestion of tool output can alter future execution.

- Where cloud metadata services (e.g., GCP/AWS) could be accessed via SSRF.

Use MITRE ATLAS to map adversarial techniques (prompt injection, SSRF, data exfiltration, model manipulation) and CSA MAESTRO to evaluate autonomy escalation, workflow chaining, and tool misuse paths.

Explicitly assess where the “Lethal Trifecta” exists: sensitive data access + untrusted input + outbound communication. Reference architectures such as the FINOS multi-agent reference architecture are useful here, as they help define tool interfaces, identity flows, and planner/worker trust boundaries explicitly—providing a structured baseline for threat enumeration and control placement.

2. Enforce Least Privilege at the Tool and Framework Layer(s)

Prompt-based guardrails are insufficient. Model alignment is not a control boundary. The execution layer is.

Implement enforcement at the tool and framework level(s):

- Task-level tool scoping (per-task overrides > per-agent permissions).

- Immutable execution plans (Plan-Then-Execute).

- JSON-schema validation on tool inputs and outputs.

- Short-lived, narrowly scoped credentials.

- Read-only tokens wherever feasible.

- Explicit network egress allow-lists.

- Metadata service blocking.

Prevent tool outputs from modifying execution targets (e.g., email recipient immutability). Avoid shared credentials in .env files. Use secret managers and runtime injection mechanisms with strict scoping.

3. Isolation and Sandboxing

Agentic systems should not operate with host-level privileges or unrestricted network access.

Implement isolation at two layers–infrastructure and execution.

Infrastructure Isolation

- Containerized execution (Docker / DevContainers).

- Read-only file mounts.

- No host socket or kernel access.

- Network allow-lists at firewall level.

- Segmented runtime identities per agent.

Execution Isolation

- DSL-based action planning (Code-Then-Execute pattern).

- Prevent direct free-form tool chaining.

- Taint tracking of untrusted inputs across execution steps.

- Reject execution if tainted data crosses defined boundaries.

Containers reduce blast radius but do not eliminate ‘Lethal Trifecta’ risk if sensitive data and outbound access coexist inside the same runtime.

Anthropic’s Claude Code DevContainer example demonstrates environment isolation with domain allow-lists. Sandboxing reduces blast radius even when initial exploitation succeeds.

4. Split High-Risk Workflows (Break the Trifecta)

Do not allow a single agent instance to:

- Access sensitive internal data.

- Ingest arbitrary untrusted content.

- And communicate externally.

Apply structured separation:

- Research agents (external web only, no internal data).

- Internal data agents (no outbound network).

- Execution agents (no access to arbitrary or untrusted content).

Use architectural patterns from recent research:

- Action-Selector Pattern — agent triggers predefined actions without ingesting tool responses.

- Plan-Then-Execute Pattern — action graph frozen prior to untrusted input exposure.

- Dual-LLM Pattern — quarantined model processes untrusted content; privileged model never sees raw data.

- Map-Reduce Pattern — sub-agents operate on partitioned content with controlled aggregation.

Introduce human checkpoints for irreversible actions (transaction execution, record modification, data export).

5. Continuous Evaluation and Monitoring

Single-turn red teaming is insufficient. We’ve shown that multi-turn attack success can exceed 70% against systems that appear robust in single prompts.

Implement continuous assurance:

- Multi-turn adversarial testing.

- Tool-call telemetry and anomaly detection.

- Monitoring for plan mutation after tool output ingestion.

- Cross-agent delegation tracing.

- Drift detection in refusal behavior.

- Detection of “hallucinated compliance” (fabricated outputs instead of refusal).

Maintain:

- Structured execution logs.

- Append-only, hash-chained audit trails.

- Full trace replay capability for post-incident review.

- Feedback loops into fine-tuning, filtering, or policy enforcement layers.

OWASP’s updated LLM guidance now includes multi-agent attack paths. Controls must reflect adversarial persistence, not isolated single-prompt injection attacks.

Conclusion

Agentic AI is not simply an extension of ’traditional’ Generative AI deployments. It is distributed automation built on probabilistic reasoning systems with delegated execution authority. That distinction matters. The risk profile shifts from content quality to operational control.

The long arm of the law will evaluate governance, controls, and evidence—not vendor assurances.

Organizations that succeed will:

- Apply systematic agentic threat modeling.

- Enforce least privilege at the tool (and framework) layers.

- Isolate execution environments.

- Continuously red team multi-step workflows (with iterative feedback loops).

- Align controls to recognized frameworks (NIST AI RMF, ISO 42001, EU AI Act, SR 11-7).

In the age of agentic AI, the problem is no longer a model one–it is an architectural one.

We approach AI security from cloud-native security primitives: segmentation, identity control, runtime telemetry, container security, and threat modeling—extended into AI-specific attack surfaces. Agentic systems demand the same rigour as any other privileged automation layer, with additional safeguards for probabilistic behavior.

If your agents can act, your governance must be able to defend those actions.

Interested in learning more about how we can help you? Check out our AI Security services.

Related blogs

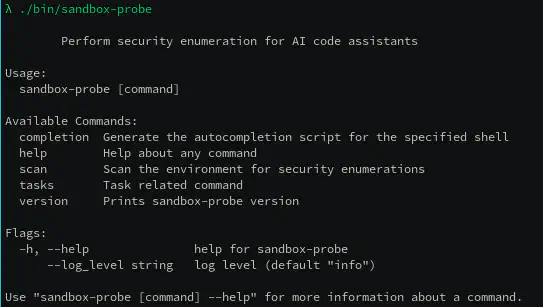

sandbox-probe: Putting AI sandboxing to the test

Check Point and ControlPlane Partner to Help Enterprises Securely Scale AI and Accelerate Agentic Innovation