Conference Recap: ControlPlane at KubeCon EU '23

- Open Source Releases

- Cloud Native Capture-the-Flag Competition

- Book Signings

- Talks

  - Back to the Future: Next-Generation Cloud Native Security - Matt Jarvis, Snyk & Andrew Martin, ControlPlane

  - Automated Cloud-Native Incident Response with Kubernetes and Service Mesh - Matt Turner, Tetrate & Francesco Beltramini, ControlPlane

  - InSPIREing Progress: How We’re Growing SPIFFE and SPIRE in 2023 and Beyond - Daniel Feldman, Hewlett Packard Enterprise & Andrés Vega, ControlPlane

  - Hacking and Defending Kubernetes Clusters: We’ll Do It LIVE!!! - Fabian Kammel & James Cleverley-Prance, ControlPlane

  - What Can Go Wrong When You Trust Nobody? Threat Modeling Zero Trust - James Callaghan & Ric Featherstone, ControlPlane

Open Source Releases

This year at KubeCon we have the pleasure of open sourcing a variety of cloud native security tooling, representing thousands of hours of thought and build time! Find out more here:

- Netassert v2: a security testing framework for fast, safe iteration on firewall, routing, and NACL rules for Kubernetes and non-containerised hosts

- Collie: NIST 800-53r5 compliant OSCAL, Kyverno, Crossplane and Lula automated compliance validation

- Threat Modelling Zero Trust: What Can Go Wrong When You Trust Nobody? Threat Modeling Zero Trust

- Argo CD End User Threat Model: Security considerations for hardening Declarative GitOps CD on Kubernetes, written for the CNCF and Intuit

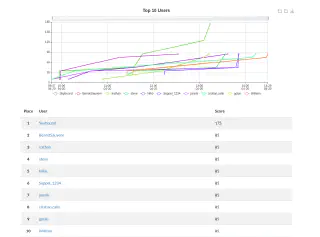

Cloud Native Capture-the-Flag Competition

Participants were encouraged to use their technical knowledge to exploit Kubernetes and associated tools in the cloud native ecosystem. The aim, as always, was for players to walk away having acquired know-how and valuable experience dynamically interacting with Kubernetes in a non-traditional manner by hacking the underlying components.

Across three scenarios of increasing difficulty, participants flexed their offensive security muscles to defeat ControlPlane’s eternal foe, Captain Hλ$ħ𝔍Ⱥ¢k. Ultimately, the player codenamed “Skybound” emerged victorious with 175 points across the three scenarios, earning a lifetime of hacker street cred - or at least until the next KubeCon!

Book Signings

Get the first half of Hacking Kubernetes for free here!

Talks

We were lucky to have over 10% of ControlPlane speaking this year, with 20% of the organisation directly supporting the remote infrastructure for the CTF, running swag giveaways at the booth, and meeting customers new and old in our live Threat Room.

Here’s the rundown of the talks. If you’d like your own threat model, please get in touch.

Back to the Future: Next-Generation Cloud Native Security - Matt Jarvis, Snyk & Andrew Martin, ControlPlane

Bright and early on Thursday, Andrew and Matt treated us to their predictions for the world of cloud native security in the years to come. A decade ago, Andrew and Matt were bootstrapping on-prem private clouds without Kubernetes or Docker. The pace of change from then to now has been remarkable, and is only increasing. Following a light-speed history of cloud native, they covered the future of process isolation, build processes, hardware, Linux and the kernel, keys and cryptography, and AI and LLMs in a thirty-minute tour de force, revolving around the need for trust at every level.

WebAssembly, unikernels and Kata Containers are the future of process isolation, but only Kata Containers is being used for hardcore production use cases so far. Build processes not only need to be reproducible, where what we build is bit-for-bit identical every time given the same input, but also bootstrappable, meaning that the entire pipeline itself is built and verified at build time. Even the hardware needs to be verifiable, which is hard when most current hardware is notoriously proprietary. Enter open source silicon projects like OpenRISC and OpenTitan. New computer architectures such as CHERI are also being worked on, aiming to update programming paradigms which have changed little for 30 years.

Moving on to all things Linux, eBPF is bringing security, observability and performance benefits in the form of a wide range of in-kernel programs. Some of these are written in the Rust programming language, which is starting to be seen more often in the Linux kernel due to its memory safety and performance. Confidential computing is another hot area right now, with AWS Nitro Enclaves bringing previously unseen functionality into cloud environments. Homomorphic encryption and ways to run trusted workloads on untrusted compute are also being actively researched and developed.

Public key cryptography has served us well for 30 years, but the rapid development of quantum computers is a potential game-changer. The ability of quantum computers to crack problems like prime factorisation (using Shor’s algorithm) in a fraction of the time of classical computers means there is a need to develop “post-quantum” cryptography to replace existing algorithms like RSA and Diffie-Hellman. We will need to migrate our keys and infrastructure to the new algorithms when this process is complete.

Finally, the hottest of hot topics at the moment: Artificial Intelligence and Large Language Models are proving to be truly transformational for how we interact with computers, how we write applications and how we defend them. Alongside the creation of new job descriptions (e.g. Prompt Engineering), the exponential developments in AI and ML could have offensive implications through automating discovery of novel exploits and chaining them together to get deep into a target’s infrastructure. On the flip side, there will also be rapid growth in the field of automated remediation and response (see the talk below), thereby fighting fire with fire.

As the security landscape becomes exponentially more complex, the principles remain the same: it all comes down to trust, there’s no such thing as secure software, and training the next generation has never been more important.

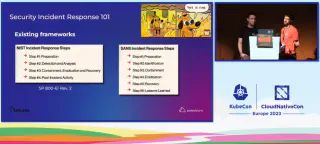

Automated Cloud-Native Incident Response with Kubernetes and Service Mesh - Matt Turner, Tetrate & Francesco Beltramini, ControlPlane

Cloud native application patterns introduce challenges to traditional Incident Response teams, processes and tools, such as volatility / scaling, how to fit IR into DevOps workflows, and a knowledge gap when it comes to the rapidly changing technologies in use. However, increased automation, advanced platform capabilities and GitOps-style continuous delivery can actually be leveraged to respond faster and more effectively to incidents.

Taking an intelligence-driven approach to defence and focusing on stopping the attacker’s kill chain in its tracks is key to modern IR. Francesco and Matt demonstrated an innovative way to use native Kubernetes and Istio features to mark a workload as compromised and apply strong, granular controls to contain it for forensic analysis, preventing the attack from spreading while reducing any impact to service levels. This all starts with high-quality threat intelligence data and Indicators of Compromise (IoCs), and relies on logs being sent from all workloads to the SIEM. When an IoC is detected, the SOAR can run an automated response to remove the compromised workload from its deployment, block all east-west network traffic and reschedule non-compromised workloads onto a hardened container runtime.
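To make the containment step more concrete, here is a minimal sketch in Python of the two Kubernetes objects such an automated response might generate. It is not the presenters' implementation: the `quarantine` label key, the policy name and the helper function names are all hypothetical, and it uses a plain Kubernetes NetworkPolicy rather than the Istio features shown in the talk. The idea is that stripping the labels a Deployment selects on detaches the pod from its ReplicaSet (which then schedules a clean replacement), while a NetworkPolicy with no rules isolates the quarantined pod.

```python
import json

QUARANTINE_LABEL = "quarantine"  # hypothetical label key, not taken from the talk


def quarantine_label_patch(selector_labels):
    """Build a merge-patch body for a pod that removes the labels its
    Deployment selects on and adds a quarantine label instead."""
    # In a JSON merge patch, a null value deletes the key.
    labels = {key: None for key in selector_labels}
    labels[QUARANTINE_LABEL] = "true"
    return {"metadata": {"labels": labels}}


def deny_all_policy(namespace):
    """Build a NetworkPolicy that selects quarantined pods and lists both
    policy types without any allow rules, so all traffic is denied."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "quarantine-deny-all", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {QUARANTINE_LABEL: "true"}},
            "policyTypes": ["Ingress", "Egress"],
        },
    }


if __name__ == "__main__":
    # Example values ("app: payments", namespace "prod") are illustrative only.
    print(json.dumps(quarantine_label_patch({"app": "payments"}), indent=2))
    print(json.dumps(deny_all_policy("prod"), indent=2))
```

The patch body could be applied with `kubectl patch pod <name> --type merge -p '<json>'`, and the policy with `kubectl apply -f`. Note that NetworkPolicy enforcement depends on the cluster's CNI plugin supporting it.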

Now that the compromised workload is constrained, and the possibility of lateral movement is eliminated, analysis can begin. First, take a checkpoint of the container to maintain integrity of evidence. By attaching an ephemeral debug container, forensic tools can be used to confirm whether it is a true positive, and harvest more IoCs for use in future detection. Workloads may be contained further by reconfiguring the relevant firewalls (including WAFs) to block similar attacks in future. “Definitely-compromised” pods can be deleted, and all others can be restarted. Kubernetes natively handles the typical recovery stage of an IR engagement, so more time can be spent on post-attack analysis and increasing automation, for example by using Operators such as the one Matt wrote to carry out the constraint, containment and eradication stages more elegantly.

InSPIREing Progress: How We’re Growing SPIFFE and SPIRE in 2023 and Beyond - Daniel Feldman, Hewlett Packard Enterprise & Andrés Vega, ControlPlane

The SPIFFE/SPIRE projects have had several exciting developments in the last year, all contributing to the goal of providing cryptographically strong workload identity and enabling Zero Trust. Adoption of SPIFFE/SPIRE continues to grow, as does the ecosystem of integrations.

Late in 2022, SPIFFE/SPIRE achieved CNCF Graduated status, which is a strong sign of maturity. TPM integration continues to develop, supporting new use cases such as Proof of Residency challenges and implementation in IoT/offline environments. SPIRE is supported by Istio as a certificate provider, and also in Solo.io’s Gloo Fabric and AWS App Mesh. SPIRE can now verify containers signed using Sigstore before granting a SPIFFE ID, which allows for GCP-style Binary Authorisation in all environments where SPIFFE/SPIRE is implemented.

Official Helm charts have been developed for installing SPIRE, and Windows support is being actively worked on. Credential composers are another feature for enabling more flexible integrations with service providers (SPs), as they allow for mutation of token and certificate fields so that SVIDs can be used when authenticating to SPs with specific field requirements. Work is in progress on alternative tokens to JWT to address some of the limitations of that token type, as well as on more flexible federation between trust domains and confidential computing use cases.

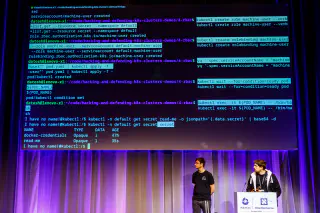

Hacking and Defending Kubernetes Clusters: We’ll Do It LIVE!!! - Fabian Kammel & James Cleverley-Prance, ControlPlane

Fabian and James demonstrated their hacking chops to give a fantastic introduction to how Kubernetes clusters can be exploited, and some best practices to defend against these common attacks. The scenarios followed the steps an attacker would take, beginning with Discovery / Initial Access and finishing up with a fiendish use of some exposed Docker Hub credentials to poison an image to have one of their own containers deployed in the target environment.

Along the way, Fabian and James scanned ports, harvested credentials, enumerated permissions, escalated privilege and got unauthorised access to all manner of systems and services. Key takeaways included always using least privilege, enforcing Pod Security Standards, restricting access to the Kubernetes API server at a network level, and pinning container image references to a specific digest rather than relying on a mutable tag. More advanced recommendations included signing and verifying containers, and using short-lived secrets with regular rotation.
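The advice to pin images to a specific hash (digest) can be checked mechanically. The following is a small illustrative sketch in Python, not something shown in the talk: the function names are hypothetical, and it assumes SHA-256 digests and a pod spec already loaded as a dictionary.

```python
import re

# An image reference is treated as pinned when it ends with
# "@sha256:" followed by 64 lowercase hex characters.
DIGEST_RE = re.compile(r"@sha256:[0-9a-f]{64}$")


def is_digest_pinned(image_ref):
    """Return True if the image reference ends with a sha256 digest."""
    return bool(DIGEST_RE.search(image_ref))


def unpinned_images(pod_spec):
    """List the images in a pod spec (containers and initContainers)
    that are referenced by tag or by name only."""
    containers = pod_spec.get("containers", []) + pod_spec.get("initContainers", [])
    return [c["image"] for c in containers if not is_digest_pinned(c["image"])]


if __name__ == "__main__":
    # Illustrative pod spec; the image names and digest are made up.
    spec = {
        "containers": [
            {"name": "web", "image": "registry.example.com/web:1.4.2"},
            {"name": "api", "image": "registry.example.com/api@sha256:" + "ab" * 32},
        ]
    }
    print(unpinned_images(spec))
```

A tag such as `:1.4.2` can be repointed by anyone with push access to the registry, which is what made the image poisoning step in the demo possible; a digest identifies one exact image.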

Frequent threat modelling was encouraged throughout, and attendees left the talk with the exhortation to “exploit your cluster before anyone else does” ringing in their ears. In fact, the talk was so popular that Fabian and James were asked to repeat their talk!

What Can Go Wrong When You Trust Nobody? Threat Modeling Zero Trust - James Callaghan & Ric Featherstone, ControlPlane

Beginning with a simple example architecture with two workloads needing to talk to each other and a cloud provider API, James and Ric ran through the first three of the four questions of threat modelling (What are we building? What could go wrong? What can we do about it? How are we doing?) with a focus on Zero Trust for the workloads.

A detailed Data Flow Diagram (DFD) was created for this architecture, to which the STRIDE methodology was applied to identify some key threats. When devising controls to mitigate these threats, James showed how cryptographically strong workload identity enables many of the necessary countermeasures such as mTLS for encryption-in-transit, granular layer 7 network authorisation and preventing workload spoofing. After updating the architecture to include these controls, Ric demoed a fully fledged implementation using KinD which worked, despite an AWS change the night before the talk requiring some last-minute configuration fixes!

However, it’s not enough to threat model the architecture once: the updated architecture now needs to be analysed from a security perspective as well. Focusing in on the threat of an attacker overwriting the OPA policy bundle, another DFD was shown and an attack tree was drawn up detailing the steps necessary for the threat to be realised. This threat was mitigated by using KMS to verify the policy bundle signature via a Custom Bundle Signer and Verifier for OPA which Ric and James had to implement themselves. The talk finished up with a final demo of the hardened architecture, with only certain requests from the workload being allowed due to the custom authorization policy.
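The principle behind that mitigation, refusing to load a policy bundle whose signature does not verify, can be sketched in a few lines of Python. This is a deliberately simplified stand-in and not the signer and verifier from the talk: it uses an HMAC from the standard library in place of a KMS-backed signature, it does not follow OPA's bundle signature format, and the function names and key are hypothetical.

```python
import hashlib
import hmac


def sign_bundle(bundle_bytes, key):
    """Return a hex signature over the SHA-256 digest of the bundle.
    HMAC is a stand-in here; the talk used KMS-backed signing."""
    digest = hashlib.sha256(bundle_bytes).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()


def verify_bundle(bundle_bytes, signature, key):
    """Recompute the signature and compare it in constant time."""
    expected = sign_bundle(bundle_bytes, key)
    return hmac.compare_digest(expected, signature)


if __name__ == "__main__":
    key = b"demo-key-not-for-production"  # illustrative only
    bundle = b"package authz\ndefault allow = false\n"
    signature = sign_bundle(bundle, key)

    # The untouched bundle verifies; an overwritten one does not.
    print(verify_bundle(bundle, signature, key))
    print(verify_bundle(bundle.replace(b"false", b"true"), signature, key))
```

With a shared-secret HMAC, anyone who can verify can also sign, which is one reason an asymmetric key held in a KMS is a better fit for the real threat: the workload only needs the ability to verify.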
