Cloud Native and Kubernetes Security Predictions 2023

- CVEs continue to rampage and tear through the supply chain

- Kubernetes RBAC and security complexity continues to intensify

- Passwords and credentials will continue to be stolen as zero trust is slow to be adopted

- AI and ML will be harnessed by attackers more effectively than defenders

- Automated defensive remediation will continue to grow slowly

- eBPF technology powers all new connectivity, security, and observability projects

- Closed-source vendors face calls for SBOM delivery to derive mean time to remediation statistics

- Vulnerability Exploitability Exchange format (VEX) sees initial adoption

- Cybersecurity insurance policies will increasingly descope ransomware and negligence as governments increase fines

- Confidential computing starts to be put through high-throughput test cases

- Linux Kernel ships its first Rust module

- Server-side WebAssembly tooling starts to proliferate after Docker’s alpha driver

- New legislation will continue to force standards that risk lack of real-world adoption or test

- CISOs will shoulder unjust legal responsibility and the talent shortage will be exacerbated because of it

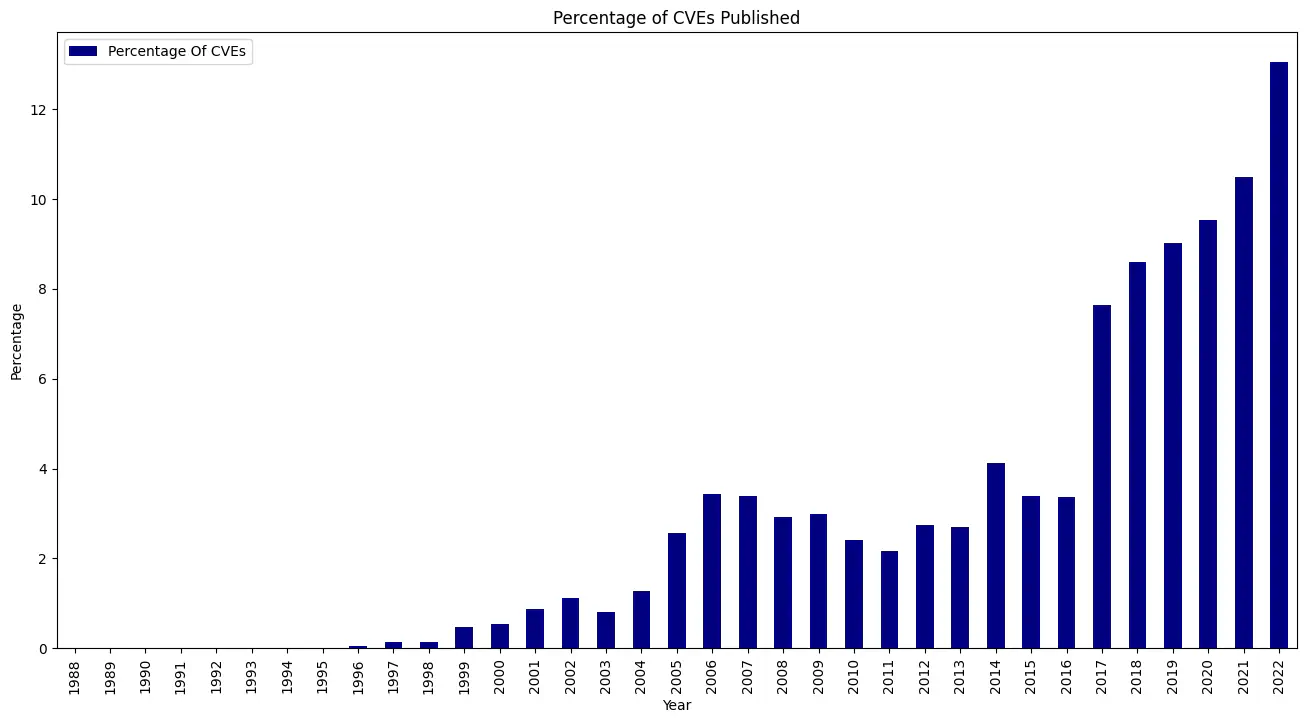

CVEs continue to rampage and tear through the supply chain

The number of CVEs published is growing dramatically year on year, whether through enhanced scrutiny, the proliferation of software, or the natural interaction of the two. This growth is coupled with a steep rise in supply chain attacks.

Targeted attacks against specific consumers are now common, with recent examples such as the PyTorch attack over the Christmas period. OSS package repositories such as PyPI and npm are widely consumed but rarely scrutinised, which has enabled new vectors for embedding vulnerabilities en masse, alongside traditional methods such as typosquatting, as documented in TAG Security’s Catalog of Supply Chain Compromises.

Vulnerability researchers now have more ways to monetize their professional curiosity than ever before, thanks to the acceptance of penetration testing and the rise of bug bounties and platforms like HackerOne.

One of the main problems with understanding the impact of a CVE on your system is its exploitability. Potentially vulnerable code paths in dependencies may not be reachable, process controls may prevent hostile code paths or process behaviours from executing, or preventative security or platform tools may detect and isolate the exploit as it is fired. Tools such as Semgrep Supply Chain, Snyk Priority Score, and CodeQL specialise in this form of executable interrogation, determining whether a specific vulnerability is reachable and thus whether the consuming application is at risk of compromise.
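To make the reachability idea concrete, here is a minimal sketch in Python. It assumes a call graph has already been extracted from the application and its dependencies; the graph, the function names, and the helper names are all hypothetical, and this is not how any of the tools named above work internally.

```python
from collections import deque


def reachable_functions(call_graph, entry_points):
    """Return every function reachable from the entry points (breadth-first search)."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        caller = queue.popleft()
        for callee in call_graph.get(caller, ()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


def is_vulnerability_reachable(call_graph, entry_points, vulnerable_function):
    """True if the vulnerable function can be reached from any entry point."""
    return vulnerable_function in reachable_functions(call_graph, entry_points)


# Hypothetical call graph: each key calls the functions in its list.
CALL_GRAPH = {
    "app.main": ["app.handle_request"],
    "app.handle_request": ["libfoo.parse_header"],
    "libfoo.parse_header": [],
    "libfoo.parse_legacy": ["libfoo.unsafe_copy"],  # never called by the app
    "libfoo.unsafe_copy": [],
}

if __name__ == "__main__":
    print(is_vulnerability_reachable(CALL_GRAPH, ["app.main"], "libfoo.unsafe_copy"))
```

In this toy graph the vulnerable `libfoo.unsafe_copy` ships with the dependency but is never called from the application's entry point, so a scanner that only matches package versions would still report it.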

ControlPlane has been researching, remediating, and proactively defending against supply chain attacks since 2017, and will continue to work with industry and governments around the world to protect critical infrastructure and information.

Kubernetes RBAC and security complexity continues to intensify

As the internal Kubernetes API continues to be extended, and applications build out functionality using CRDs, the operational complexity of running Kubernetes will continue to rise. The emerging Platform Engineering mentality looks to create a layer atop Kubernetes with secure-by-default guard rails to enable developers to ship applications quickly and securely, at the cost of some developer flexibility. That flexibility is required for any non-trivial or regulated system, for example high-throughput trading platforms, telephony, and healthcare, that often have non-standard requirements and complex authorisation interconnectivity.

Observability, intrusion detection, and security incident monitoring are key for critical systems. More widely, the new Pod Security Standards make default admission control stronger, and are less complex than the previous Pod Security Policy, but do not address the sprawling RBAC roles that are often overly permissive – especially for high-privileged operators.

ControlPlane’s BadRobot operator security audit tool statically analyses manifests for high risk configurations and can be used to alleviate some of these concerns. More widely, threat modelling and low-level design are needed to ensure that application requirements that require exemptions from standardised Kubernetes platforms are secure-by-design.
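As an illustration of the kind of static check involved, the following Python sketch flags wildcard rules in an RBAC role that has already been loaded into a dictionary. It is a deliberately simplified assumption of what "high risk" means, it is not BadRobot's implementation, and the role and function names are hypothetical.

```python
def overly_permissive_rules(role):
    """Return the rules of a Role/ClusterRole dict that use a wildcard in any field."""
    fields = ("apiGroups", "resources", "verbs")
    findings = []
    for rule in role.get("rules", []):
        if any("*" in rule.get(field, []) for field in fields):
            findings.append(rule)
    return findings


# Hypothetical operator role, as it would look after parsing a YAML manifest.
OPERATOR_ROLE = {
    "apiVersion": "rbac.authorization.k8s.io/v1",
    "kind": "ClusterRole",
    "metadata": {"name": "example-operator"},
    "rules": [
        {"apiGroups": [""], "resources": ["pods"], "verbs": ["get", "list"]},
        {"apiGroups": ["*"], "resources": ["*"], "verbs": ["*"]},
    ],
}

if __name__ == "__main__":
    for rule in overly_permissive_rules(OPERATOR_ROLE):
        print("wildcard rule:", rule)
```

A real audit would also need to consider escalation paths that involve no wildcard at all, such as access to secrets or the ability to create pods.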

Passwords and credentials will continue to be stolen as zero trust is slow to be adopted

There are two potential vectors for data exfiltration: outsider attacks and insider threats. A constant in both cases is the risk of compromised credentials, which are then used to gain access to the network and systems with elevated privileges over sensitive data. Tightly scoped credentials with a short time-to-live mitigate a large range of attacks, and the adoption of a zero trust security model can help to minimise the risk of key and data exfiltration. It is a rare single control that significantly increases system resistance.

To support this effort, SPIFFE has existed for a number of years and has just graduated as a CNCF project, yet adoption continues to be slow due to the structural and fundamental nature of the software within a complex distributed system. As the newest generation of systems is built and deployed, SPIFFE, and its reference SPIRE implementation, are the obvious choice as the foundation of a zero trust framework to consume short-lived cryptographically verifiable identities as a function of the platform.
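The sketch below shows, in Python, the policy side of consuming such an identity: the SPIFFE ID must belong to the expected trust domain, and the credential must be both short-lived and currently valid. It assumes the identity document's signature has already been verified elsewhere (for example by a SPIRE-issued SVID library); the function names, the one-hour maximum lifetime, and the example IDs are assumptions for illustration only.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import urlparse


def parse_spiffe_id(spiffe_id):
    """Split a SPIFFE ID of the form spiffe://trust-domain/path into its parts."""
    parsed = urlparse(spiffe_id)
    if parsed.scheme != "spiffe" or not parsed.netloc:
        raise ValueError(f"not a SPIFFE ID: {spiffe_id!r}")
    return parsed.netloc, parsed.path


def is_identity_acceptable(spiffe_id, issued_at, expires_at, trust_domain, now,
                           max_ttl=timedelta(hours=1)):  # max_ttl is an assumed policy
    """Accept only in-domain, short-lived, currently valid identities."""
    domain, _path = parse_spiffe_id(spiffe_id)
    if domain != trust_domain:
        return False
    if expires_at - issued_at > max_ttl:
        return False  # long-lived credentials defeat the purpose
    return issued_at <= now < expires_at


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    print(is_identity_acceptable(
        "spiffe://example.org/ns/payments/sa/api",  # hypothetical workload
        issued_at=now - timedelta(minutes=5),
        expires_at=now + timedelta(minutes=10),
        trust_domain="example.org",
        now=now,
    ))
```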

AI and ML will be harnessed by attackers more effectively than defenders

Automated attack simulation has been growing in potential since DARPA’s 2016 Cyber Grand Challenge, a competition for automated binary analysis and exploitation.

While we see Deepfakes infect the information landscape, China passing laws requiring watermarking of all AI-generated content, and universities banning the use of AI-assisted writing, the greater threat is as yet unrealised. AI tooling that is able to effectively iterate through known implants and exploit payloads, chaining permutations together in novel or untested ways, may wreak havoc on our systems and require greater detective capabilities.

Moving forward, the spiritual or literal successors to ChatGPT are expected to be able to generate weaponized payloads for existing vulnerabilities, and eventually novel exploits for new code.

Automated defensive remediation will continue to grow slowly

The foil to the harbinger of AI doom is automated remediation: attackers think in graphs and need only to find a single entrypoint to a secure system, whereas defenders must emphatically protect all aspects of their system with a checklist-based approach.

Into this threat landscape comes the potential for defenders to proactively probe their defences with the same automation that attackers are harnessing, combined with automated remediation: isolating breached workloads, blackhole-ing compromised routes, and revoking compromised key material.

The risk inherent in these approaches is that false positives trigger remediation against healthy workloads, resulting in production downtime, so they are not yet widely seen. However, the reduction in risk possible with companion AI systems may make this a viable approach over the coming years.
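One way to limit the downtime risk is to automate a response only when a detection is high-confidence and hand everything else to a human. The Python sketch below illustrates that gating; the detection kinds, action names, and the 0.9 threshold are hypothetical and not taken from any particular product.

```python
# Hypothetical mapping from detection kind to the automated response.
REMEDIATIONS = {
    "workload_breach": "isolate_workload",
    "route_compromise": "blackhole_route",
    "key_compromise": "revoke_key",
}


def plan_response(detection, auto_threshold=0.9):
    """Return (action, target); automate only known, high-confidence detections."""
    action = REMEDIATIONS.get(detection["kind"])
    if action is None or detection["confidence"] < auto_threshold:
        # Unknown or uncertain detections go to a human to avoid needless downtime.
        return ("escalate_to_human", detection["target"])
    return (action, detection["target"])


if __name__ == "__main__":
    print(plan_response({"kind": "key_compromise", "target": "signing-key-1", "confidence": 0.97}))
    print(plan_response({"kind": "workload_breach", "target": "pod/api-7f9", "confidence": 0.55}))
```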

eBPF technology powers all new connectivity, security, and observability projects

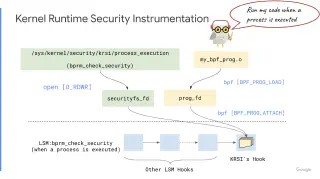

eBPF (extended Berkeley Packet Filter) is a technology that has been increasingly used in recent years to enhance the performance, security, and observability of various Linux-based systems. This technology allows for the creation of in-kernel programs that can be used for a variety of purposes such as packet filtering, system call tracing, and security enforcement.

One of the most notable features of eBPF is its support for high-speed packet processing through the eXpress Data Path (XDP) program, which can offload network functions to smart network cards for acceleration. Additionally, ongoing work on the Kernel Runtime Security Instrumentation (KRSI) eBPF-LSM (Linux Security Module) closes the gap between asynchronous observability and in-process system call blocking. It provides a consistent interface for security enforcement, making it possible for new CNCF projects like KubeArmor to leverage eBPF to enhance application security, and obviating the need for consumers to write eBPF applications.

While eBPF has many advantages, it is not without risks, and the kernel it runs in is undergoing constant change. The bytecode triple transpilation is not foolproof, and its trust domains can be used to inject arbitrary code into the kernel and escalate. Additionally, the Linux capability requirements for eBPF (CAP_PERFMON, CAP_BPF) are common prerequisites for container breakouts. It is important for consumers to be aware of these risks and for eBPF to be abstracted and commoditized to make it safer for adoption.

Closed-source vendors face calls for SBOM delivery to derive mean time to remediation statistics

SBOMs for cloud provider APIs and SaaS applications may initially appear to have little utility, but they reveal the mean time to mitigation and patching for the organisations behind them. This offers insight into an otherwise inscrutable build process and can give an indicator of confidence in software producers.
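As a rough illustration of the arithmetic, suppose successive SBOMs from a vendor show the release date on which each vulnerable component was replaced. The mean time to remediation is then the average gap between disclosure and fix. The dates and function name in this Python sketch are hypothetical.

```python
from datetime import date


def mean_time_to_remediation(records):
    """Average days from disclosure to fix, ignoring vulnerabilities still unfixed."""
    durations = [
        (fixed - disclosed).days
        for disclosed, fixed in records
        if fixed is not None
    ]
    if not durations:
        return None
    return sum(durations) / len(durations)


# Hypothetical (disclosed, fixed) pairs derived from a vendor's SBOM history.
RECORDS = [
    (date(2023, 1, 1), date(2023, 1, 11)),  # fixed after 10 days
    (date(2023, 2, 1), date(2023, 2, 5)),   # fixed after 4 days
    (date(2023, 3, 1), None),               # not yet remediated
]

if __name__ == "__main__":
    print(mean_time_to_remediation(RECORDS), "days")
```

Unfixed vulnerabilities are excluded from the average here, which flatters the vendor; tracking the count and age of open items alongside the mean gives a fairer picture.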

The software may run in sensitive locations, where a remotely exploitable vulnerability could grant an attacker access to the rest of the system. SBOMs for closed-source software are therefore particularly important for providing introspection into application code, considering that the alternative is to trust a vendor that unverifiably self-attests that its product is “secure”.

Vulnerability Exploitability Exchange format (VEX) sees initial adoption

While CVEs continue to muddy the waters of vulnerability remediation with unexploitable false positives, one of the hardest jobs in security is analysing those critical warnings. A platform executing millions of transactions a day is likely to have a tangible business cost to downtime, and the decision to halt a release due to a vulnerability in new software must be weighed against business availability and feature delivery.

To address this, there is a potential solution in the Vulnerability Exploitability Exchange format (VEX). This is a companion document to the National Vulnerability Database (NVD), Software Bills of Materials (SBOMs), and vulnerability scanners. VEX brings transparency to vulnerability exploitability, detailing whether a software package that bundles a vulnerable dependency is actually affected by that vulnerability. For example, if a web application has a remotely exploitable build-time dependency that is not packaged and distributed with the final container image, and the build infrastructure does not connect to a network or expose the vulnerability, the build is unexploitable and the code is safe to run.

Many CVE scanning approaches do not take this nuance into account, giving vulnerability assessors in every organisation the same, duplicate responsibility. As with SBOM maintenance, initial unwillingness to adopt VEX seems to stem from resourcing, time, or dependency debt (such as the overhead of keeping VEX documents up to date) rather than from logical or technical debt. When distributed and signed by a trusted entity such as the vendor or reputable security researchers, VEX can relieve this burden.
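A minimal Python sketch of how a consumer might apply VEX statements to scanner output follows. The four status values (`not_affected`, `affected`, `fixed`, `under_investigation`) are the ones VEX defines; the record layout, field names, product names, and CVE identifiers are simplified placeholders rather than a real VEX schema, and signature verification is assumed to have happened already.

```python
# Statuses that still need a human decision or a patch.
ACTIONABLE_STATUSES = {"affected", "under_investigation"}


def actionable_findings(findings, vex_statements):
    """Drop findings that a VEX statement marks as not_affected or fixed."""
    status_by_key = {
        (s["vulnerability"], s["product"]): s["status"] for s in vex_statements
    }
    remaining = []
    for finding in findings:
        status = status_by_key.get((finding["vulnerability"], finding["product"]))
        # Findings with no VEX statement stay in the queue.
        if status is None or status in ACTIONABLE_STATUSES:
            remaining.append(finding)
    return remaining


# Placeholder scanner output and VEX statements.
FINDINGS = [
    {"vulnerability": "CVE-0000-0001", "product": "webapp:1.2"},
    {"vulnerability": "CVE-0000-0002", "product": "webapp:1.2"},
    {"vulnerability": "CVE-0000-0003", "product": "webapp:1.2"},
]
VEX_STATEMENTS = [
    {"vulnerability": "CVE-0000-0001", "product": "webapp:1.2", "status": "not_affected"},
    {"vulnerability": "CVE-0000-0002", "product": "webapp:1.2", "status": "affected"},
]

if __name__ == "__main__":
    for finding in actionable_findings(FINDINGS, VEX_STATEMENTS):
        print(finding["vulnerability"])
```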

Cybersecurity insurance policies will increasingly descope ransomware and negligence as governments increase fines

The cost of a breach has increased year on year as cloud usage increases in complexity, attackers gain sophistication, and regulatory penalties become more stringent. According to IBM figures, the average cost of a breach is around USD$4.35 million, and insurance companies are increasingly removing coverage from their policies for certain types of incidents, such as ransomware and negligence.

Coupled with increased policy premiums and deductibles, general cloud compromises and supply chain attacks may lose cover as the cost of data breaches increases in line with the value of disruption caused to schools, governments, and industry.

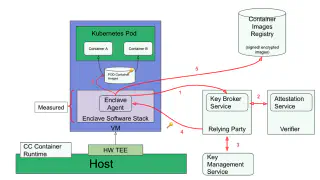

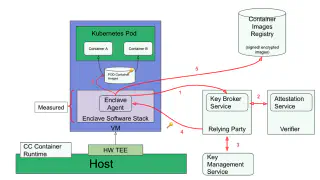

Confidential computing starts to be put through high-throughput test cases

Confidential computing is an emerging technology that looks to address the security challenges associated with the processing of sensitive data in cloud environments. It uses hardware-based security features to secure data during computation, as opposed to in transit or at rest, by using encrypted memory and secure processing enclaves to encrypt data during processing. Trusted hardware components, such as Trusted Execution Environments or enclaves, are a crucial component of confidential computing as compromised host systems can potentially leak data to an observer on the host. Confidential Containers is a recent Kubernetes-based implementation using Kata Containers to separate guest applications from the underlying cloud infrastructure.

Existing confidential computing implementations are focused on isolating data, with use cases designed to protect against compromised host systems. Long-term goals such as homomorphic encryption, where data is processed in an encrypted state, are also being researched. However, these technologies are still in their early stages and are not currently practical due to limitations in the instruction set, resulting in slower execution of individual instructions and longer processing times.

Linux Kernel ships its first Rust module

Rust is a programming language that has been gaining popularity due to its focus on safety and performance. Although support for Rust in the Linux kernel is currently scant, the inclusion of a device driver written in Rust is a significant step towards a new era for the kernel, and begins to reduce the risk of the memory-safety bugs to which memory-unsafe languages like C are prone. Rust’s position as a memory-safe systems language distinguishes it, as long as “unsafe” mode isn’t used to bypass its memory safety guarantees, and its developer community expects to see more Rust modules in the kernel in the future.

Those sceptical of Rust’s claims to Kernel contribution have quietened, and as the Kernel refuses to break backward compatibility, any Rust code that is merged could only be removed by rewriting it in C.

This is the inertial start of a change that may take many years to come to effective fruition in terms of security benefits, but it heralds the dawn of a new era for both the kernel and Rust.

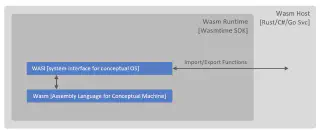

Server-side WebAssembly tooling starts to proliferate after Docker’s alpha driver

WebAssembly (WASM), a binary format for executable code, has recently been added as a plugin to Docker’s runc engine. It has already matured through in-browser implementations, allowing complex applications to be built in C++, Go, Rust, or around 40 other languages and compiled into a format that the browser executes in its WASM virtual machine.

Server-side WASM is unlikely to replace containers this year. Its core and APIs are still under active development and the runtime suffers the same lack of developer-friendliness as unikernels: there’s very little opportunity to debug such lean runtimes. However, distroless container approaches have helped developers understand cross-namespace debugging, so there is some hope that WASM use will expand.

WASM reduces a container’s attack surface as it supports bundling application dependencies in the binary, reducing the ability for remote code execution attacks that require some runtime to execute their payload. Additionally, capability-based security (distinct from Linux capabilities), cross-platform portability, and a least-privilege sandboxing model give server-side WASM an interesting potential future.

Its uptake will be dictated by the ease of use for developers already uncomfortable with the explosion of tooling and abstractions they have to deal with for containers. Tools like Deislabs’ containerd-wasm-shims already provide containerd shims for running WASM on Kubernetes.

New legislation will continue to force standards that risk lack of real-world adoption or test

Recent legislation, such as the Biden executive order issued in response to the uptick in supply chain attacks, has made good technical sense. However, further legislative proposals have left the industry reeling: the mandate for zero vulnerabilities in software sits at odds with the desire to run recent versions of applications and libraries.

The continuous delivery pattern promotes well-tested code and frequent releases, which ensures operator agility when urgent patches are needed, a fact of life for any large organisation. Proving that applications are entirely free of vulnerabilities, or that the associated CVEs are unreachable, is a laborious task. Centralising CVE and VEX remediation efforts may be a more effective use of person-hours than the “zero vulnerability” approach would require.

OpenUK continues to work with international governments and industry to advocate for technically-aligned open source policy. The upcoming State of Open Con 2023 features speakers from across the open source community with a focus on: government, law and policy; security; platform engineering; and open data.

CISOs will shoulder unjust legal responsibility and the talent shortage will be exacerbated because of it

Security is difficult, and removing the incentive for intelligent individuals to lead their departments by belabouring them with onerous legislation and responsibility is not commensurate with their already challenging work.

The Uber CISO, Joe Sullivan, was sacrificed to the authorities for concealing a breach and paying the hacker “hush money” — he now faces up to 8 years in prison. Were this level of social responsibility applied to those that cause material harm to the financial system, and thus the poorest in society, the actions of boardrooms across the world may be very different.
