Validating Zero Trust: Network Policy Testing with Flux CD and NetAssert

GitOps has revolutionised how we deliver applications, enabling faster deployments and managing infrastructure with targeted, declarative precision. That precision, however, does not extend to securing dynamic environments, which remains incredibly difficult.

Consider a traditional three-tier application architecture: a frontend web service, a backend API, and a data store. Historically, the engineer’s accountability ended once the application code was pushed to version control, and automation carried it to a production environment that developers might not even be permitted to access. Today, that paradigm has fundamentally shifted: engineering teams can no longer just ship code; they must engineer for production.

The same evolution has taken place in modern software packaging. A microservice repository now includes deployment manifests and Helm charts with configurations for Staging, UAT, and Production. Engineering teams have been DevOpsed: they own not just the application code but also the cross-environment configuration and delivery mechanism.

Expanded Engineering Mandates: Security as Code

Secure production environments already statically analyse runtime configuration for security issues and report on CVEs affecting their applications. With Kubernetes’ declarative inter-pod firewalls (the NetworkPolicy resource in the API), this shifting operational mindset extends to the security of the software-defined network (SDN). It is no longer viable for a disconnected security team to guess at firewall rules after the fact and apply them with days or weeks of latency.

Instead, software engineers and architects, who have the deepest understanding of application topology, are best equipped to define SDN policy for the microservices they create. Security teams enable this shift by providing support and education on why Network Policies matter, and by auditing them for consistency.

Without empirical testing, misconfiguration and human error, which drive the majority of cloud security breaches, go undetected. We can no longer simply hope that our YAML is correct; we need empirical proof that our policies work.

And so another development practice crosses over from operations into security and DevSecOps: just as we write end-to-end (E2E) tests to verify application logic, we must apply the same rigour to validating network connectivity and isolation.

The Illusion of YAML Security and the CNI Reality

Zero Trust architectures enforce defence in depth, validating inter-service communication with Layer 7 (L7) mutual trust and metadata heuristics. They should also configure SDN firewalling as a safeguard in case of breach, but Kubernetes Network Policy is notoriously complex: policies are additive, so the traffic a pod accepts is the union of every policy that selects it, and a pod that no policy selects accepts all traffic. A single misconfigured label selector or omitted ingress rule can therefore silently negate the network’s security posture.
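The additive rule is easier to see in code. Below is a deliberately simplified Python model of ingress evaluation, not how any CNI enforces policy: it assumes a single namespace and `matchLabels`-style pod selectors only, and ignores ports, namespace selectors, IP blocks, and egress. `IngressPolicy`, `ingress_allowed`, and the label values are hypothetical.

```python
from dataclasses import dataclass, field

Labels = dict[str, str]


def matches(selector: Labels, labels: Labels) -> bool:
    """matchLabels semantics: every selector key must be present with the same value.

    An empty selector matches every pod.
    """
    return all(labels.get(key) == value for key, value in selector.items())


@dataclass
class IngressPolicy:
    """Simplified model of a NetworkPolicy with policyTypes: [Ingress]."""

    pod_selector: Labels  # which pods this policy isolates
    allow_from: list[Labels] = field(default_factory=list)  # peer podSelectors; empty = deny all ingress


def ingress_allowed(policies: list[IngressPolicy], src: Labels, dst: Labels) -> bool:
    """Return True if a pod labelled `src` may open a connection to a pod labelled `dst`."""
    selecting = [p for p in policies if matches(p.pod_selector, dst)]
    if not selecting:
        # No policy selects the destination, so it is not isolated: all ingress is allowed.
        return True
    # Policies are additive: traffic is allowed if ANY selecting policy allows it.
    return any(matches(peer, src) for p in selecting for peer in p.allow_from)


FRONTEND = {"app": "frontend"}
BACKEND = {"app": "backend"}
DATABASE = {"app": "database"}

# Intended topology: frontend -> backend -> database.
intended = [
    IngressPolicy(pod_selector=BACKEND, allow_from=[FRONTEND]),
    IngressPolicy(pod_selector=DATABASE, allow_from=[BACKEND]),
]

# Same intent, but a typo in one selector means no policy selects the database pod.
typo = [
    IngressPolicy(pod_selector=BACKEND, allow_from=[FRONTEND]),
    IngressPolicy(pod_selector={"app": "databse"}, allow_from=[BACKEND]),
]
```

With `intended`, `ingress_allowed(intended, FRONTEND, DATABASE)` returns `False`. With `typo`, the same call returns `True`: the misspelt selector means no policy selects the database pod, so it falls back to accepting all ingress, even though both policy sets are equally valid YAML.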

Standard CI/CD pipelines typically only lint YAML for syntax errors, verifying that policies are structurally valid on paper but not how the network actually behaves once they are deployed.

More importantly, there is a fundamental architectural risk. Kubernetes simply stores these policies in the API; it relies entirely on the Container Network Interface (CNI) plugin to enforce them. If your CNI plugin does not support Network Policy, or is misconfigured or malfunctioning, Kubernetes will still accept the YAML without an error. The policies will appear perfectly valid, but traffic may in reality flow entirely unrestricted, silently ignoring your security intent.

A declarative YAML file is just a statement of intent. It is not proof of security.

The Architecture: Moving from Intent to Reality

To truly secure modern microservices, organisations must adopt Continuous Verification. This means shifting network security left by integrating post-deployment E2E testing directly into the delivery pipeline.

Our proposed Zero Trust architecture provides a robust, automated framework for moving from intent to verified reality.

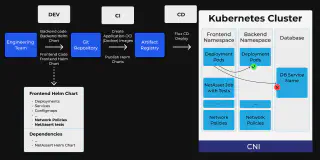

Figure 1

Here is how the flow works, using our frontend application example:

1. Test-Driven GitOps Foundation (Dev and Git)

The Engineering Team writes the code for all microservices, including the frontend and the backend, along with their respective Helm charts. This example focuses on the ‘Frontend Helm Chart’, which packages the standard Kubernetes manifests, the Network Policies, and the specific NetAssert test configurations. The same pattern applies to the backend and every other microservice.

Crucially, to execute these tests, the chart declares the official NetAssert Helm chart as a dependency. The NetAssert engine is pulled in as a subchart, integrating the testing framework directly into the application artifact.

Alternatively, teams can use a Helm umbrella chart that includes every individual microservice and NetAssert as subcharts, validating the environment as a whole.
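As a CI-side complement, a build can refuse to publish a chart that has lost its test dependency. This minimal Python sketch assumes `Chart.yaml` has already been parsed into a dictionary (for example with a YAML library). The `netassert` chart name, its version, and the registry URL are placeholders rather than the official chart coordinates, and `declares_dependency` is a hypothetical helper.

```python
# A parsed Chart.yaml, written as a Python dict for illustration.
FRONTEND_CHART = {
    "apiVersion": "v2",
    "name": "frontend",
    "version": "1.4.0",
    "dependencies": [
        {
            "name": "netassert",  # assumed chart name
            "version": "1.0.0",  # placeholder version
            "repository": "oci://registry.example.com/charts",  # hypothetical registry
        },
    ],
}


def declares_dependency(chart: dict, dep_name: str) -> bool:
    """Return True if an apiVersion v2 chart lists `dep_name` under `dependencies`."""
    if chart.get("apiVersion") != "v2":
        # Chart.yaml `dependencies` is the v2 mechanism; v1 charts used requirements.yaml.
        return False
    return any(dep.get("name") == dep_name for dep in chart.get("dependencies", []))
```

Running `declares_dependency(FRONTEND_CHART, "netassert")` against each microservice chart, or against the umbrella chart, returns `True` here; a `False` result would fail the build before an untested chart reaches the registry.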

2. Continuous Integration and Artifact Creation (CI)

The CI pipeline monitors Git. It builds the application OCI images and publishes the combined Helm charts to the Artifact Registry.

3. Declarative Continuous Deployment (CD / Flux CD)

As a robust, enterprise-grade GitOps agent, Flux CD sits inside the cluster and handles the Continuous Deployment phase. It continuously monitors the Artifact Registry for new chart versions. This is possible because Helm can distribute charts as OCI artifacts, so they can be hosted in the same registries as your container images, with consistent distribution, validation, and signing.
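Flux performs this version tracking itself; for OCI sources it can, for example, follow a semantic-version range. The Python function below is only a simplified model of the idea, not Flux's implementation. It assumes plain `MAJOR.MINOR.PATCH` tags and a hypothetical policy of staying on the currently deployed major version; `parse_semver` and `newer_release` are illustrative names.

```python
from __future__ import annotations


def parse_semver(tag: str) -> tuple[int, int, int] | None:
    """Parse a plain MAJOR.MINOR.PATCH tag (optionally prefixed with 'v').

    Returns None for anything else, such as "latest" or "1.2.0-rc.1".
    """
    if tag.startswith("v"):
        tag = tag[1:]
    parts = tag.split(".")
    if len(parts) != 3 or not all(part.isdecimal() for part in parts):
        return None
    major, minor, patch = (int(part) for part in parts)
    return major, minor, patch


def newer_release(tags: list[str], deployed: str, major: int) -> str | None:
    """Return the highest tag on `major` that is newer than `deployed`, or None.

    Versions compare as integer tuples, so 1.10.0 ranks above 1.9.3.
    """
    current = parse_semver(deployed)
    if current is None:
        raise ValueError(f"deployed version is not plain semver: {deployed!r}")
    best: tuple[tuple[int, int, int], str] | None = None
    for tag in tags:
        version = parse_semver(tag)
        if version is None or version[0] != major or version <= current:
            continue  # skip non-semver tags, other majors, and anything not newer
        if best is None or version > best[0]:
            best = (version, tag)
    return None if best is None else best[1]
```

Given the tags `1.2.0`, `1.9.3`, `1.10.0`, `2.0.0`, and `latest` with `1.2.0` deployed, `newer_release(tags, "1.2.0", 1)` returns `"1.10.0"`: comparison is numeric rather than lexical, non-semver tags are skipped, and the new major version is left for a deliberate upgrade.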

When an update is detected, Flux CD natively manages the Helm release process: it pulls the artifact, reconciles the cluster state, and deploys the Pods, Services, and Network Policies so they exactly match the published chart and, through it, the Git repository it was built from.

Pro tip: Adopting a Gitless GitOps architecture further enhances Continuous Verification, letting you pull OCI artifacts without requiring Git credentials in your cluster and enabling native signature verification. This is a powerful way to streamline supply chain security without adding operational overhead.

4. Continuous Verification in Action (Within the Cluster)

The embedded NetAssert configuration is triggered once the pods are deployed. NetAssert actively probes the network by injecting ephemeral containers that use standard network sockets to generate traffic exactly where the applications reside. Because the probes are ordinary connections, the test works transparently with any underlying CNI and verifies that the CNI actually enforces the applied policies. Following Figure 1:

- It confirms the ‘Frontend Pod’ can talk to the ‘Backend Pod’ (green check)

- It confirms the ‘Frontend Pod’ cannot talk to the ‘Database Pod’ (red cross)
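The core idea behind a socket-level test is that each case states an expected outcome and passes only if reality matches it. The Python sketch below illustrates that idea; it is not NetAssert's test format or code, and `ProbeCase`, the service names, and the ports are hypothetical.

```python
import socket
from dataclasses import dataclass


@dataclass(frozen=True)
class ProbeCase:
    """One connectivity expectation: can host:port be reached from where this runs?"""

    name: str
    host: str
    port: int
    expect_reachable: bool


def tcp_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP handshake to host:port completes within `timeout` seconds.

    Policy-blocked traffic usually shows up as a timeout (packets dropped),
    sometimes as a refusal; both raise OSError and count as unreachable.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def run_case(case: ProbeCase, timeout: float = 2.0) -> bool:
    """A case passes when the observed reachability matches the expectation."""
    return tcp_reachable(case.host, case.port, timeout) == case.expect_reachable


# The two checks from Figure 1, using hypothetical in-cluster service names and ports.
FIGURE_1_CASES = [
    ProbeCase("frontend -> backend", "backend.shop.svc.cluster.local", 8080, expect_reachable=True),
    ProbeCase("frontend -> database", "database.shop.svc.cluster.local", 5432, expect_reachable=False),
]
```

Note that the database case passes only when the connection fails, so a CNI that silently ignores the policy turns that case red rather than green.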

5. The Automated Success or Failure Gate

NetAssert is executed as a Kubernetes Job tied to a Helm post-deployment hook (post-install or post-upgrade), creating an automated feedback loop. If a network probe fails because of a policy misconfiguration or a failing CNI, the Kubernetes Job fails.

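That failure causes the Helm hook to fail. Flux CD detects this and immediately marks the entire release as failed. The deployment process is halted and the engineering team is alerted. Because a post-deployment hook runs after the resources have been applied, configuring Flux’s upgrade remediation to roll back returns the cluster to the previous release instead of leaving it in an unverified state.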
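The whole gate hinges on one convention: the test container's exit code. Kubernetes marks a pod as failed when its container exits non-zero, and once the Job's `backoffLimit` is exhausted the Job itself fails, which is what the Helm hook waits on. A minimal Python sketch of that final step, assuming the runner has already collected one pass/fail result per case; `job_exit_code` and `failure_report` are hypothetical names, and NetAssert's own runner handles this for you.

```python
from typing import Mapping


def job_exit_code(results: Mapping[str, bool]) -> int:
    """Exit code for the test container: 0 only if at least one case ran and all passed.

    An empty result set is treated as a failure, so a broken or empty
    test suite cannot pass vacuously.
    """
    if not results:
        return 1
    return 0 if all(results.values()) else 1


def failure_report(results: Mapping[str, bool]) -> list[str]:
    """One line per failing case, so the Job's logs explain why the release failed."""
    return [f"FAIL: {name}" for name, passed in results.items() if not passed]
```

A container entrypoint would print `failure_report(results)` and finish with `sys.exit(job_exit_code(results))`. On the Helm side, the Job template carries the `helm.sh/hook: post-install,post-upgrade` annotation, so Helm runs it after each install or upgrade and waits for it to complete.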

The Business Imperative

For Engineering and Compliance Directors, the value extends beyond catching misconfigurations. Automating network policy testing reduces friction between engineering and security teams. Engineers can move faster with confidence, knowing they won’t breach security boundaries. Security teams can significantly reduce the cost and time of regulatory audits, knowing that every deployment is empirically verified to enforce its tested policies.

Just as comprehensive unit test coverage is an industry standard, organisations must now establish a baseline for network policy test coverage. Teams must still work towards comprehensive coverage to avoid blind spots, especially for negative paths (traffic that should be blocked), but this automation verifies on every deployment that the security rules you do test are actually enforced in production.
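One hypothetical way to make such a baseline measurable, assuming a flat set of services and ignoring ports: count positive paths (what the policies allow) and negative paths (everything else) separately, so the negative-path gap cannot hide behind a single percentage. `path_coverage` is an illustrative metric, not a NetAssert feature.

```python
from itertools import permutations


def path_coverage(
    services: set[str],
    allowed: set[tuple[str, str]],
    tested: set[tuple[str, str]],
) -> tuple[float, float]:
    """Return (positive, negative) test coverage over service-to-service paths.

    Positive paths are the (source, destination) pairs the policies allow;
    negative paths are every other ordered pair of distinct services.
    Each figure is the fraction of those paths exercised by at least one test.
    """
    all_paths = set(permutations(services, 2))
    positive_paths = allowed & all_paths
    negative_paths = all_paths - positive_paths
    positive = len(positive_paths & tested) / len(positive_paths) if positive_paths else 1.0
    negative = len(negative_paths & tested) / len(negative_paths) if negative_paths else 1.0
    return positive, negative
```

For the Figure 1 topology, assuming the policies allow frontend to backend and backend to database, the two Figure 1 tests give `(0.5, 0.25)`: half of the allowed paths are tested, but only one of the four paths that should be blocked.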

Ready to Shift Left Your Network Security?

In 2026, relying on static configurations to protect dynamic environments is a risk your business can no longer afford. Stop hoping your network is secure and start proving it.

- GitOps/Infrastructure Upgrades: Need a robust foundation to automate this continuous-deployment workflow? Discover Enterprise for Flux CD.

- Open Source/Technical: Explore the open source NetAssert GitHub repository. View the code, star the project, and start running E2E tests today.

- Consulting and Advanced Features: Contact our team to implement Continuous Verification in a complex environment with NetAssert Enterprise, which offers native Service Mesh support.
