The Vercel Breach: When Roblox Cheats, AI Tools, and Poor Secrets Management Collide

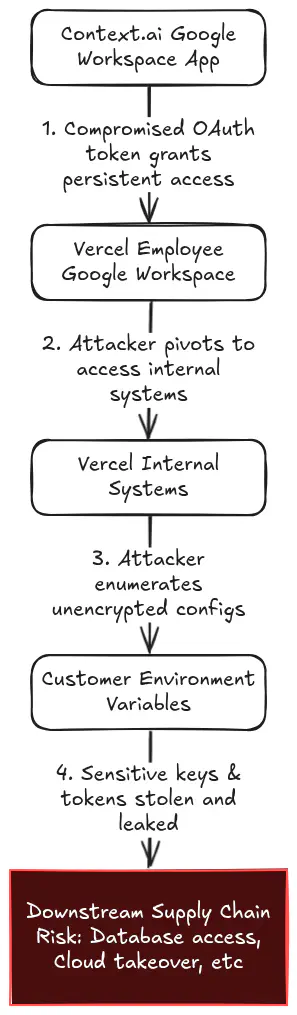

The recent breach at Vercel is a textbook example of how many modern supply-chain compromises unfold. It didn’t start with a sophisticated zero-day attack against Vercel’s core infrastructure. Instead, the breach began months earlier, when an employee at Context.ai, a third-party AI tool vendor, downloaded a Roblox auto-farm cheat script onto a corporate laptop. Bundled inside was the Lumma infostealer.

That single, mundane compromise exfiltrated Google Workspace credentials and OAuth tokens. By leveraging those stolen “Allow All” OAuth permissions, attackers pivoted directly into Vercel’s cloud environment. The ultimate impact: unfettered access to environment variables that, while encrypted at rest, were retrievable in plaintext via the API by any authenticated user or token.

The infection vector makes for a sensational headline, but the mechanics of the subsequent pivot reveal something more troubling: the danger of relying on fragmented, per-platform secret inputs rather than centralised secrets management.

The Open Source Answer to Secrets Management

The blast radius of this incident was defined entirely by how environment variables were accessed. Vercel noted that variables explicitly marked as ‘sensitive’ were protected from being read back via the UI or API, but the exposure of standard, retrievable variables handed attackers exactly the reconnaissance data they needed to map and escalate their operation.

Centralised secrets management would have closed this gap. A persistent industry misconception is that robust secrets management requires prohibitive enterprise licensing. While some commercial solutions are undeniably expensive, free, open-source platforms like OpenBao remove any excuse for relying on developers to remember to tick an opt-in ‘sensitive’ box, providing:

- Automated protection: Secrets and tokens can be generated dynamically, and everything stored is encrypted at rest by default

- Enforced rotation: Tokens can be issued with short lifetimes and rotated automatically to minimise the window of opportunity for any compromised credentials

- Cost-effective resilience: Enterprise-grade security and secret lifecycle management without the price tag
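
To make enforced rotation concrete, here is a minimal Python sketch of why short-lived, rotated credentials shrink the window in which a stolen token is useful. It assumes a hypothetical in-memory issuer (`ToyDynamicSecrets` and `Lease` are illustrative names, not OpenBao's API); a real deployment would rely on OpenBao's own leases, TTLs and revocation.

```python
import secrets
from dataclasses import dataclass


@dataclass
class Lease:
    """A short-lived credential, loosely modelled on a dynamic-secret lease."""
    token: str
    issued_at: float
    ttl_seconds: float

    def is_valid(self, now: float) -> bool:
        # A lease is only honoured inside its time-to-live window.
        return now < self.issued_at + self.ttl_seconds


class ToyDynamicSecrets:
    """Hypothetical in-memory issuer: every call to issue() mints a fresh, expiring token."""

    def __init__(self, ttl_seconds: float) -> None:
        self.ttl_seconds = ttl_seconds
        self._active: dict[str, Lease] = {}

    def issue(self, now: float) -> Lease:
        lease = Lease(secrets.token_urlsafe(16), now, self.ttl_seconds)
        self._active[lease.token] = lease
        return lease

    def rotate(self, old: Lease, now: float) -> Lease:
        # Rotation revokes the old token immediately and mints a replacement.
        self._active.pop(old.token, None)
        return self.issue(now)

    def authenticate(self, token: str, now: float) -> bool:
        # Unknown (revoked) tokens and expired leases are both rejected.
        lease = self._active.get(token)
        return lease is not None and lease.is_valid(now)


issuer = ToyDynamicSecrets(ttl_seconds=900)    # 15-minute leases
lease = issuer.issue(now=0)
stolen = lease.token                           # an infostealer copies the token
print(issuer.authenticate(stolen, now=60))     # True: still inside the TTL
print(issuer.authenticate(stolen, now=1000))   # False: the lease has expired

lease2 = issuer.issue(now=2000)
stolen2 = lease2.token
issuer.rotate(lease2, now=2100)
print(issuer.authenticate(stolen2, now=2101))  # False: revoked by rotation
```

The point of the design is that a stolen credential stops working on its own, either when its TTL runs out or as soon as it is rotated, without anyone first noticing the theft.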

The Golden Rule: Humans Write, Workloads Read

In our daily consulting engagements, we frequently encounter organisations struggling with secret sprawl and overly permissive access.

Our foundational recommendation is simple: humans should be allowed to write secrets, but must never be permitted to read them.

By default, read access to infrastructure secrets should belong exclusively to authenticated, strictly authorised workloads. When an infostealer compromises an employee’s machine, the attacker gains access to human-linked tokens. If the architecture bars those tokens from reading the underlying configuration values, the compromise hits a dead end.
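
The “humans write, workloads read” rule can be sketched as a default-deny capability check. In OpenBao, which inherits Vault’s policy model, this is typically expressed as a path policy granting human identities `create` and `update` but not `read`. The Python below is only a toy model of that idea: the role names, `POLICIES` table and `ToySecretStore` class are hypothetical and are not OpenBao’s API.

```python
from enum import Enum


class Capability(str, Enum):
    # Names borrowed from the OpenBao/Vault policy vocabulary.
    CREATE = "create"
    READ = "read"
    UPDATE = "update"


# Hypothetical role table: humans may write secrets, only workloads may read them.
POLICIES: dict[str, set[Capability]] = {
    "human": {Capability.CREATE, Capability.UPDATE},
    "workload": {Capability.READ},
}


class PermissionDenied(Exception):
    pass


class ToySecretStore:
    """Default-deny store: a request succeeds only if the caller's role grants it."""

    def __init__(self) -> None:
        self._data: dict[str, str] = {}

    def _check(self, role: str, cap: Capability) -> None:
        # Unknown roles get an empty capability set, so everything is denied.
        if cap not in POLICIES.get(role, set()):
            raise PermissionDenied(f"{role!r} lacks {cap.value!r}")

    def write(self, role: str, path: str, value: str) -> None:
        # Writing a new path needs "create"; overwriting an existing one needs "update".
        cap = Capability.UPDATE if path in self._data else Capability.CREATE
        self._check(role, cap)
        self._data[path] = value

    def read(self, role: str, path: str) -> str:
        self._check(role, Capability.READ)
        return self._data[path]


store = ToySecretStore()
store.write("human", "app/db_password", "s3cr3t")  # allowed: humans write
print(store.read("workload", "app/db_password"))   # allowed: prints s3cr3t
try:
    store.read("human", "app/db_password")         # e.g. a stolen human token
except PermissionDenied as exc:
    print("denied:", exc)                          # denied: 'human' lacks 'read'
```

Because the check is default-deny, nobody has to remember to flag a value as sensitive: a human-linked token simply has no read capability to lose.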

Vercel does provide a ‘Sensitive’ flag that implements this concept, but this incident proves that optional security is inherently flawed. If security relies on developers manually toggling a setting for every critical configuration, failures are inevitable.

The AI Security Paradox

This incident also serves as a stark reminder of the industry’s current misalignment in priorities. AI companies are aggressively selling the idea that artificial intelligence will soon automate cybersecurity professionals out of a job. Agentic AI models are scanning codebases around the clock, hunting for vulnerabilities at a scale no human team could match, and they’re finding them.

However, none of that stopped the Vercel breach. Instead, a Roblox cheat and a few seconds of careless OAuth consent did more damage than any sophisticated exploit. The breach happened because human convenience was prioritised over security. No amount of automated vulnerability scanning catches an employee downloading a cheat script on a corporate laptop.

Poorly integrated AI tools are giving attackers new front doors into the modern Cloud Native stack. We need to stop treating AI as a security panacea and start treating it as the highly privileged, high-risk attack vector it has proven itself to be.

Ultimately, the Vercel breach underlines that while Agentic AI models can help solve security problems, the ‘human in the loop’ remains a fragile integration point. The real lesson isn’t about Roblox cheats and infostealers; it’s about the danger of overly privileged permissions and the lack of a secure-by-default system for environment variables. Resilience lies in using a centralised secrets management system and granting third-party integrations only the privileges they actually need.
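
One practical way to apply least privilege to third-party integrations is to periodically compare the OAuth scopes each integration was granted against the scopes it actually needs. The sketch below assumes a hypothetical `Grant` record and made-up scope names (`docs.read`, `admin.all`, `*`); real identity providers use their own scope strings and expose granted scopes through their own admin tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    integration: str
    requested: frozenset[str]
    needed: frozenset[str]


# Hypothetical scope names; substitute your identity provider's real scopes.
BROAD_SCOPES = frozenset({"*", "admin.all"})


def audit(grant: Grant) -> list[str]:
    """Return findings for one grant; an empty list means it looks least-privilege."""
    findings = []
    excess = grant.requested - grant.needed
    if excess:
        findings.append(f"{grant.integration}: unneeded scopes {sorted(excess)}")
    broad = grant.requested & BROAD_SCOPES
    if broad:
        findings.append(f"{grant.integration}: broad scopes {sorted(broad)}")
    return findings


ai_tool = Grant(
    integration="example-ai-assistant",  # hypothetical third-party AI tool
    requested=frozenset({"*"}),          # an "Allow All" style consent
    needed=frozenset({"docs.read"}),
)
for finding in audit(ai_tool):
    print(finding)
```

Running an audit like this against every connected app turns “least privilege for integrations” from a policy statement into a list of specific grants to revoke or narrow.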

Reach out to the ControlPlane team to ensure your secrets and workloads are resilient against the next supply-chain compromise. Whether you’re navigating the complexities of AI toolchain security or looking for a hardened, production-ready secrets strategy, we offer Enterprise support for OpenBao to help you achieve zero-trust maturity without vendor lock-in.

We can help secure your AI systems.